AI Didn’t Kill Cinematography. It Made It Mandatory.

A quick note before we begin: I will be teaching a 90-minute workshop on how to think about motion and how to move your stories from script to screen using AI, during the Spikes Asia 2026 Creative Campus event at the National Design Center. I will be joined by Joao Flores, the Executive Creative Director at Monks.

If you are a brand or agency looking to leverage AI for ideation and visual storytelling (static or motion), send me a note, and let’s see how we could work together.

Something curious is happening in AI filmmaking right now.

The conversation is getting louder — but also narrower.

Every week, there is a new demonstration. A new model. A new benchmark. Someone posts a side-by-side comparison. Someone else declares that a threshold has been crossed. The tools are evolving in public, and the industry is responding exactly as industries always do when a technology accelerates quickly: by focusing almost entirely on the technology itself.

Look at this model. Look what it can generate. Look how realistic this is. Look how fast this renders.

The vocabulary of progress has become technical.

But the experience of cinema is not technical. It is perceptual. And somewhere in this widening gap between capability and perception, something important is being overlooked.

AI lowered the cost of images. It did not teach anyone how to compose them.

That distinction is becoming harder to ignore.

The Quiet Assumption Behind AI Filmmaking

There is a subtle belief shaping how people approach generative video tools, and it rarely gets stated out loud.

If the system can create the image, it must also understand how to present it. If the model can generate a scene, it must also know how to stage it. If the output looks cinematic, the cinematic thinking must already be embedded somewhere inside the machine.

This assumption is understandable. For more than a century, cinematic skill was bound to physical equipment. Cameras were heavy, mechanical, expensive, and difficult to operate. Mastery meant controlling hardware. When the physical barriers collapsed, it felt logical that the associated knowledge might collapse with them.

But that is not what happened. The tools became easier. The decisions did not.

AI systems are extraordinarily capable at producing visual material. They are far less capable at deciding why one visual choice should exist instead of another. They do not naturally understand narrative hierarchy. They do not assign emotional weight instinctively. They do not choose visual structure with intention unless that intention is explicitly specified.

Left alone, most generative video tends toward visual neutrality. The images are often striking, but the staging is cautious. The camera hovers, or remains still. The framing is balanced but noncommittal. The environment is coherent but not directional. Color is attractive but episodic. Each clip can feel complete on its own, yet disconnected from everything around it.

The result is a strange aesthetic condition: footage that is visually impressive but psychologically flat.

Nothing is wrong, but nothing is being emphasized either.

And emphasis — more than realism, more than resolution, more than fidelity — is what creates meaning in moving images. Which is why the real skill gap emerging in AI filmmaking is not technical. It is cinematic.

The Craft Gap

The industry is currently over-indexing on tools because tools are visible. They can be demonstrated, compared, upgraded, and measured.

Craft is different. Craft is invisible until it is absent.

What many people are beginning to sense — often without fully articulating it — is that certain dimensions of filmmaking do not emerge automatically from generative capability. They have to be imposed. Designed. Directed. Structured. Maintained.

Three of those dimensions are becoming particularly decisive:

Camera movement

Color continuity

Lens logic and framing

None of them are new. All of them are suddenly central again.

Camera Movement — Motion Creates Meaning

Most AI-generated footage is visually alive but spatially passive.

Things exist within the frame. They look convincing. They may even move internally — characters walking, environments shifting, particles drifting. But the camera itself often remains observational. It records rather than participates.

This seems like a small detail until you remember what camera movement actually does in cinema.

The camera does not simply capture action. It directs attention. It establishes hierarchy. It signals importance. It creates psychological alignment between the viewer and the subject. It determines whether we feel like observers, witnesses, participants, or intruders.

A slow push inward transforms curiosity into intimacy.

A lateral tracking move reveals relationships in space.

An orbit isolates a subject from its environment.

Handheld instability introduces vulnerability or tension.

A restrained drift invites contemplation.

These are not stylistic flourishes. They are narrative instruments.

When camera movement is absent, attention is unguided. When attention is unguided, emphasis disappears. When emphasis disappears, the emotional structure of the scene weakens — even if the image itself remains visually impressive.

A scene without camera intention is just surveillance.

AI systems do not yet possess an intuitive model of cinematic emphasis. They do not automatically move the camera in ways that reveal meaning. They move when instructed to move — and when not instructed, they often default to stillness or minimal motion.

Which means that prompting camera movement is no longer an optional refinement. It is becoming a foundational storytelling skill. Not because the tools are limited, but because narrative emphasis must always be deliberate.

Framing and Lens Logic — Perspective Is Psychological Architecture

Framing is often misunderstood as composition within a rectangular boundary: what is included, what is excluded, and where the subject sits.

But framing begins long before composition. It begins with perspective — and perspective is determined by the lens.

Different focal lengths do not simply alter magnification. They reshape spatial relationships. They alter the perceived distance between objects. They influence emotional proximity. They change how the viewer experiences scale, intimacy, isolation, and environment.

A wide lens places subjects within context, emphasizing spatial immersion.

A long lens compresses distance, isolating subjects from their surroundings.

A 35mm perspective often feels human and immediate.

A 65mm field can feel monumental, architectural, almost ceremonial.

These are not technical characteristics. They are perceptual conditions.

Lens choice is not technical. It is psychological architecture.

This becomes especially important in generative filmmaking because reference images themselves carry implicit lens assumptions. If the visual language of reference material conflicts with the perspective implied in generated sequences, the world begins to feel inconsistent — even if viewers cannot articulate why.

Learning how lenses shape perception is not beginner cinematography. It is not advanced optical theory. It is something in between — what might be called the middle of cinematography. The layer where visual decisions become emotional structures.

That middle layer is where AI filmmakers increasingly have to operate.

Color Grading — Continuity Is Emotional Glue

Generative video systems produce fragments of time. Cinema produces the experience of continuous time. That difference sounds technical, but it is deeply perceptual.

When humans watch a film, we do not consciously evaluate color consistency from shot to shot. But our perceptual system tracks it automatically. Light temperature, contrast structure, saturation relationships — these form a kind of emotional atmosphere that binds moments together into a single coherent reality.

When that atmosphere shifts unpredictably, something subtle fractures. The world feels unstable. The emotional tone resets between shots. Time feels segmented rather than flowing.

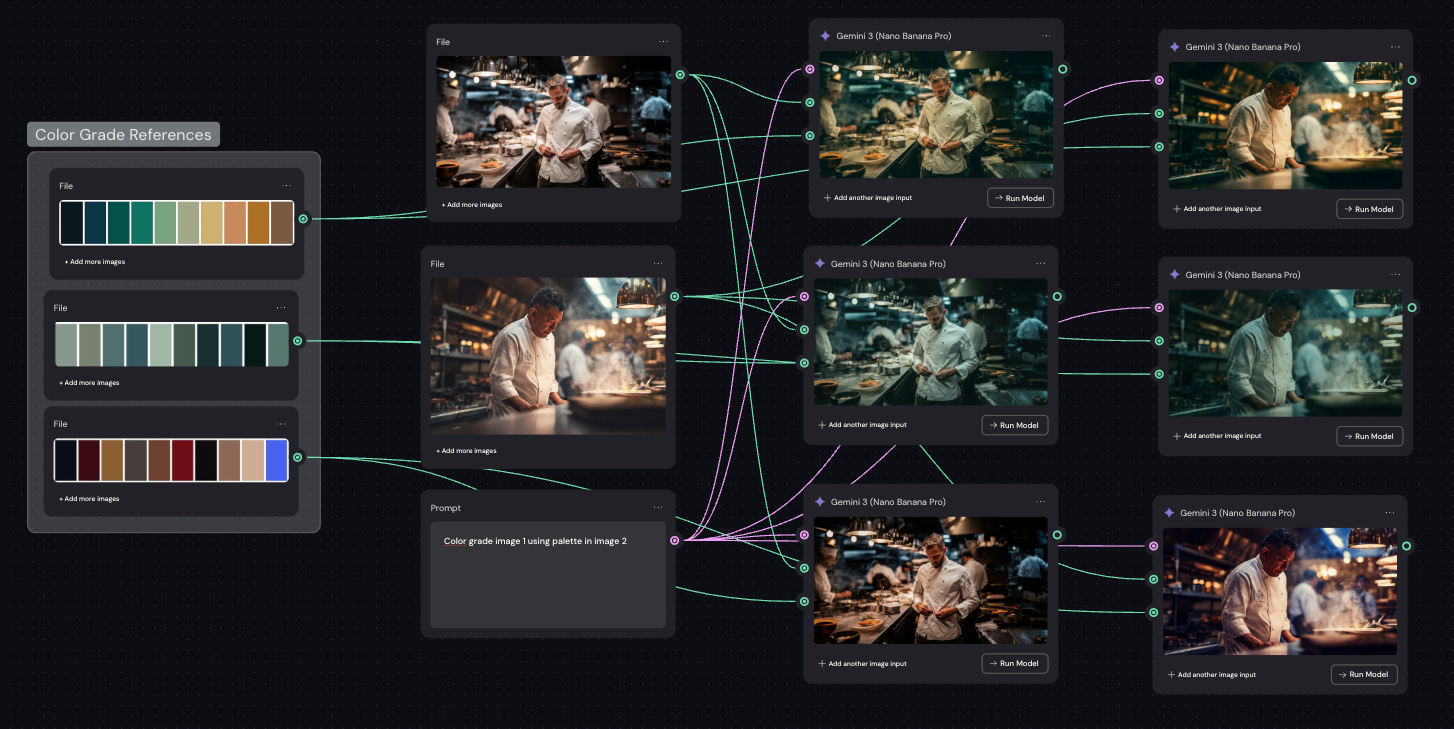

Most AI systems generate clips independently. Each sequence may be internally consistent, but consistency across sequences must be actively constructed. Without deliberate grading, visual continuity becomes accidental rather than structural.

And continuity is not merely aesthetic polish. It is narrative integration.

Color grading is what turns generated moments into a continuous reality.

This is why filmmakers working seriously with generative tools increasingly find themselves learning color — not as a finishing touch, but as a unifying language. It is the mechanism that allows disparate pieces of generated footage to inhabit the same emotional universe.

AI can create images. Grading creates worlds.

*Note: When I create a scene, I try to get as close to the intended color as possible during prompt testing. For the final grade, though, I am learning DaVinci Resolve, or I bring in a strong colorist when the budget allows.*

What Actually Changed

Generative video did not eliminate cinematography. It redistributed it.

For most of film history, cinematographic decisions were executed physically — through equipment, placement, and mechanical control. Today, those decisions are specified conceptually — through prompts, references, and visual systems.

The craft moved upstream.

The competitive advantage is no longer the ability to operate a camera. It is the ability to define what the camera should mean. Which reveals something the current tool-focused conversation often misses: the technology is not selecting for people who know the software best.

It is selecting for people who understand visual intention most deeply.

The Real Divide Emerging Now

Many people will become highly proficient at generating footage. Fewer will become skilled at structuring visual experience.

Those two capabilities are not the same, and the distance between them is widening.

Knowing which model to use is useful. Knowing how attention moves through space is decisive. Knowing how to generate a shot is useful. Knowing why that shot should exist is decisive. Knowing how to produce clips is useful. Knowing how to build a coherent visual world is decisive.

The models will continue to improve. Motion synthesis will become smoother. Temporal consistency will increase. Resolution will rise. Automation will expand. But none of that will replace the need to decide how a viewer should feel when the camera moves, when color shifts, when perspective changes.

That work does not belong to the machine. It never did.

I am not studying cinematography because I want to become a filmmaker in the traditional sense. I am studying it because generative systems make cinematic decisions explicit again. They expose the structure that physical production once concealed behind equipment and process.

And once that structure is visible, it becomes impossible to ignore. Tools generate images. Craft generates meaning.

The models will keep getting better. The question is whether this emerging cohort of filmmakers will.

About RockPaperScissors and Motion Design

RockPaperScissors has been quietly evolving over the past few years, from AI-driven static imagery, strategy development, and training and workshops to motion-based storytelling. I did not predict that, at the beginning of 2026, this would be the most sought-after service I provide to my clients.

But needs change, and I am fortunate to have worked with clients who are eager to embrace the technology and the opportunity.

As AI has made image creation faster, the need for structure has increased. Scenes still need to work. Movement still needs to feel intentional. Perspective still needs to be designed. Audiences still need to be delighted.

That is the work I do. I help teams think through motion before they generate it. I design camera choreography, spatial logic, and visual systems so that what is produced feels coherent and cinematic — not accidental.

If you are experimenting with AI-driven motion, building brand films, or trying to elevate the quality of your visual storytelling, bring me in early.

Motion is easy to generate. Getting it right? Well, that’s a different story.