AI Leadership: Are We Starting from X When We Need Y?

Every few weeks, I come across a post or headline claiming that “AI is killing junior coders.” The idea is always the same: because younger workers no longer have to wrestle with problems line by line, they won’t develop the critical thinking or craft that once defined their role. They’ll lean too heavily on the shortcut, never learning the skill.

It’s a familiar anxiety, and not just in tech. We’ve heard versions of it in advertising, in journalism, in music. Every time a new tool emerges, someone predicts the death of craft.

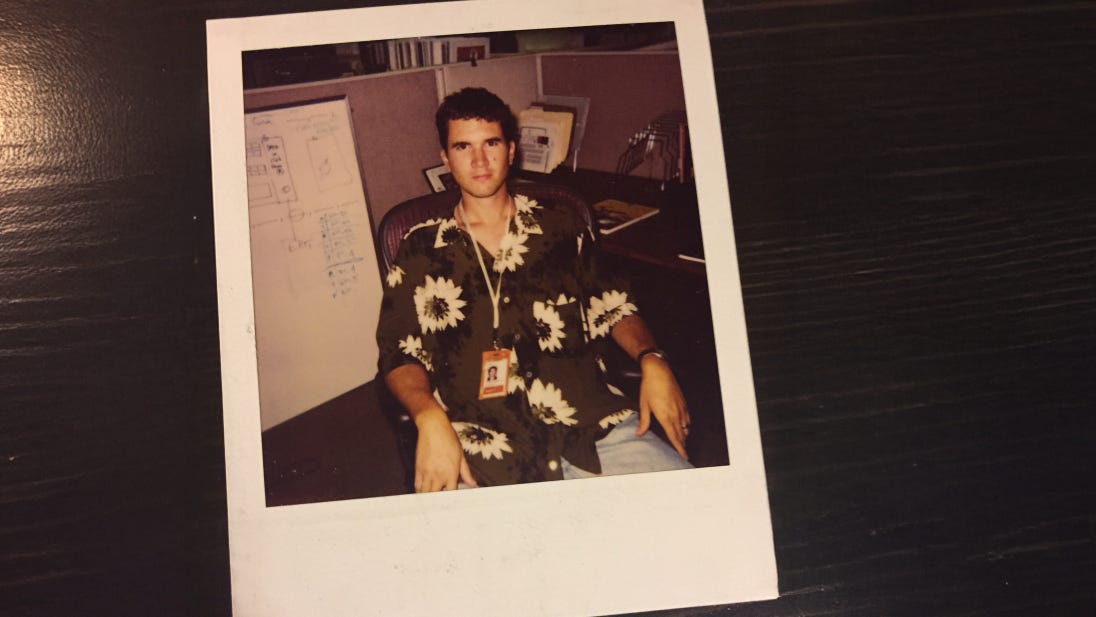

I had never heard of Douglas McGregor’s Theory X and Theory Y until I read a recent post from Emil Rijcken, whom I had the privilege of working with for the first eight months of RockPaperScissors (hard to believe my little engine that could is now 21 months old). Emil, armed with a PhD in Artificial Intelligence, is a co-founder of Seldon Digital and leads its AI development. Seldon has built a fantastic AI design co-pilot that helps you generate ideas based on intent, iterate on your designs with context, and fully explore the problem space. It’s worth noting that Andrei Herasimchuk, one of the founders, was also the guy behind groundbreaking UI/UX work at Adobe and Figma. I owe Emil and Andrei a real debt of gratitude: Seldon was RockPaperScissors’ first client.

A Management Theory for the Machine Age

From what I have since learned, McGregor was a professor at MIT Sloan in the late 1950s and early ’60s, and wrote The Human Side of Enterprise (1960), a book that quietly revolutionized management thought. His ideas arrived at a time when organizations were grappling with the shift from industrial assembly lines to white-collar, knowledge-based work.

Theory X reflected the prevailing mindset of the industrial age: people are naturally lazy, avoid responsibility, and must be directed, controlled, and threatened to achieve results. Managers operating under X assumed the worst of their employees, and so they built structures of surveillance, rigid rules, and close oversight.

Theory Y was McGregor’s counterpoint. It assumes people are intrinsically motivated, curious, capable of self-direction, and eager to grow if given the right environment. Managers who adopted Y built systems of trust, encouraged initiative, and treated people as sources of ideas, not just units of labor.

McGregor’s genius was not in declaring one theory “true” and the other “false,” but in showing how a leader’s assumptions about human nature shaped the systems they designed. If you believed in X, you created structures that stifled. If you believed in Y, you created conditions that unlocked. And people, inevitably, lived up — or down — to those expectations.

From Assembly Lines to Algorithms

Sixty-four years later, the parallels are striking. The debate around AI and junior talent is, in essence, the same debate McGregor saw in the shift from the assembly line to knowledge work. Do we assume that people, when given a powerful tool, will get lazy? Or do we assume that people, when trusted, will use that tool to accelerate their growth?

The “AI will kill junior coders” narrative is Theory X all over again.

Theory X assumes shortcuts weaken us. It assumes that, given the option, people will always take the easy way out. It assumes the worst.

My own experience says otherwise. My skills have grown faster because of AI, not despite it. I have been reading more books since I began my AI journey over four years ago. And not just books on AI, as they began to appear, but books on culture, journalism, philosophy, and creativity. I have always been a fan of science fiction, but as I began to see these imagined worlds take shape, I dove back into them looking for clues about where we might be heading. All of this renewed reading and study inspired me to write a book, To Question is To Answer, partly about AI, but mostly about how I chose to focus on question design instead of prompt hacks.

From Guardrails to Handcuffs

When I talk with leaders in agencies, brands, and creative organizations, what I hear most often are stories of constraint. Staff are told which tools they may use, how they may use them, and for what ends. I see “task-based agents” being built: systems designed not to explore but to execute narrowly, and workflows engineered to eliminate wandering.

On the surface, this looks efficient. But underneath, it is pure Theory X: architecture based on the belief that people can’t be trusted to think for themselves.

There is, of course, a place for this. Repetitive, mechanical work should be automated. That is where Theory X systems shine. But a dangerous line gets crossed when these structures extend into creative and strategic work. Creativity requires excess. It requires detours, failed experiments, sideways questions. It requires a deep dive into cultural drivers and generational belief systems. And it needs time to explore and experiment.

When leaders impose X-systems in the realm of creativity, they fail to recognize the fundamental difference between efficiency and originality. Efficiency thrives on narrowing options; originality depends on expanding them. By applying the logic of the assembly line to the work of imagination, they confuse speed with substance.

What gets lost is not just a clever idea here or there, but the conditions that allow ideas to emerge in the first place: the off-topic conversation that sparks a breakthrough, the half-baked prototype that reveals an unexpected direction, the sideways question that reframes the problem entirely. Creativity doesn’t run on compliance. It runs on curiosity. And curiosity, like trust, withers under constant surveillance.

In that sense, Theory X systems don’t just limit productivity. They corrode culture. They train people to color inside the lines, to avoid risk, to treat exploration as a liability. Over time, organizations that default to X will still generate output, but it will be incremental, predictable, and lifeless.

The irony is that these are the very organizations most desperate for innovation, and yet by the way they structure their systems, they ensure it never takes root.

So What Happens to Junior?

There is no denying that AI has changed the work of entry-level coders, copywriters, and designers. Just as the rise of desktop publishing altered the trajectory of design assistants in the 1980s, or nonlinear editing software transformed apprenticeships in film and television, AI is shifting what we expect from the bottom rungs.

But that does not mean juniors are expendable. It means the role itself must evolve.

For too long, junior staff have been treated as shadows. They sat silently in the back of the room. They churned through the low-value, repetitive work that seniors didn’t want. They waited, often for years, for “their turn.” AI has stripped much of that away. And rather than lamenting its disappearance, we should treat it as an opening.

What if junior roles became accelerators rather than apprenticeships? What if the expectation wasn’t that they “pay dues,” but that they contribute perspective from day one? What if we trained them to use AI not as a crutch but as a scaffold for understanding? What if their voices weren’t merely tolerated but actively sought out?

As I write this, I am also thinking about a call I have scheduled this week with a woman who just completed a master’s degree at the Royal College of Art, following her earlier degree at the University of the Arts London. My recent article caught the attention of her father, who reached out to ask whether I could shed some light on the world his daughter is stepping into. With only internships as her work experience, she will most likely be relegated to a junior title at a holdco agency (a gladiator school), expected to “pay her dues” and wait patiently until “her time comes.”

We need young voices. Junior voices. Urgently. We need their cultural vantage point, their instincts, their ability to see what the rest of us miss. If we build X-systems that box them out, we don’t just weaken their growth. We weaken our own future.

The Responsibility of Leadership

This is where leadership matters most. The real danger is not that AI itself will erode skills. The real danger is that leaders will design systems that train people to stop thinking.

If you believe people are lazy, you will strip away their choices. If you believe people are motivated, you will give them tools that expand their capacity. That belief — not the technology — will define the culture of your organization.

Older leaders in particular have a role to play here. Many of us came up in Theory X systems: command-and-control hierarchies, rigid approval gates, narrow job scopes. We know how deadening those structures felt. We also remember the liberation when someone finally trusted us, handed us responsibility, or gave us room to try something new. That memory gives us perspective. We can choose to lead differently now.

From X to Y

So the question isn’t whether AI will kill skills. The question is whether leaders will meet this moment with Theory X or Theory Y.

Will we build AI systems to control, or to enable? Will we focus on architecting compliance, or will we prioritize unlocking capability? Will we assume the worst in our people, or trust them to rise?

AI itself is neutral. It really doesn’t give a damn. It can be a crutch, or it can be a catalyst. It can either diminish talent or accelerate it. The choice doesn’t sit with the tool. It sits with the leader and the assumptions they bring to the table.

The story of AI is not the story of machines.

It is the story of what we believe about ourselves. Do we trust our curiosity, or do we fear it? Do we choose to liberate imagination, or do we confine it to the narrowest tasks?

McGregor’s question still echoes six decades later: X or Y? Control or trust? Constraint or possibility?

AI won’t kill skills. But our assumptions might. In the end, the future of creativity, and perhaps the future of work itself, will rise or fall not on the power of algorithms but on the courage of leaders to choose Y when it matters most.

X and Y are choices. They are reflections of what we believe about human capacity. If we start from X, we will shrink people to fit the system. If we start from Y, we will expand the system to fit their potential.

In a decade, the organizations that thrive won’t be the ones that kept their people on the tightest leash. They’ll be the ones who built cultures of trust, who saw AI not as a cage but as a lever.

Who believed their people could rise higher than the tools themselves.

That is the real leadership test of this moment.

Not So Shameless Plugs

I write because I like to, and because I believe that the thoughts in my head are shared by, or might influence, some of you reading this, or maybe someone you know.

I earn my living leading RockPaperScissors, working with agencies and brands to solve challenges. I also design and conduct workshops and training, and, on occasion, I write books, such as To Question is To Answer: How to Think Critically and Thrive in the Age of AI, and Frameworks Reframes: Thinking Models for the AI Age.