I stopped writing and went quiet to rethink how I work. Here’s why, and where it’s headed.

This is my first long read in a while. The gap wasn’t because I had nothing to say, but because I was too busy learning how to say it properly.

That’s the thing about writing honestly about AI and creative practice — you can’t fake the reps. You either know what you’re talking about from the inside, or you’re just repackaging someone else’s observations with better vocabulary. I’m not interested in that. So when the learning gets serious, the writing pauses. And for the past three weeks, the learning got serious.

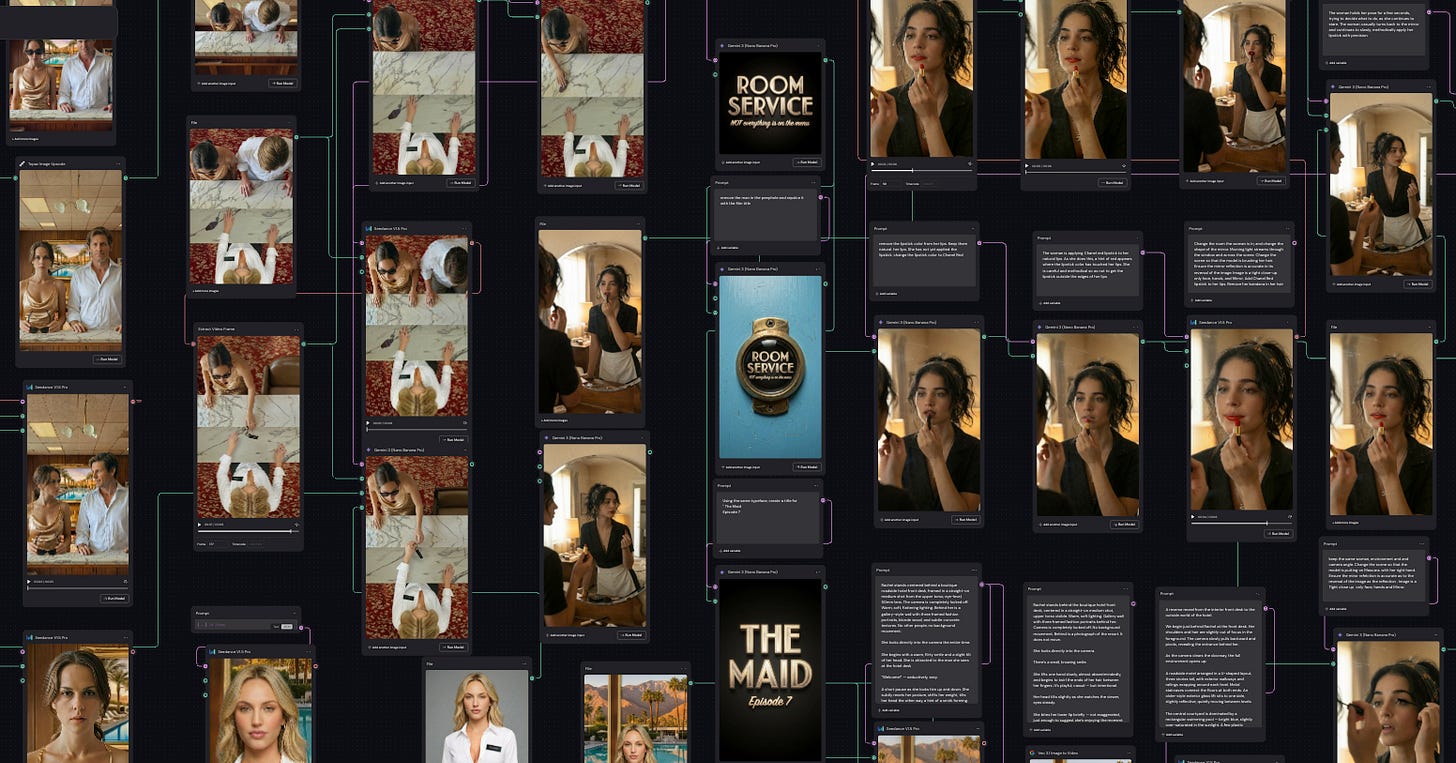

Here’s what I was doing: going deep into an AI filmmaking masterclass with Lighthouse AI Academy and Figma Weave, my go-to production platform. I was running experiments and working through structured coursework alongside people who dedicate 100% of their professional lives to this craft. Not AI evangelists. Not tech commentators. Filmmakers. Cinematographers. Directors who happen to be operating at the frontier of AI-generated motion. The kind of people who think about frame composition before they think about prompts.

That’s the shift I’ve been chasing — and it’s the one I want to write about today.

From Scene Mechanic to Director

There’s a hierarchy in AI visual production that most people don’t talk about, because most people haven’t gotten past the first level.

Level one is scene prompting. You describe a shot. The AI renders it. You’re happy with the result or you try again. It’s impressive. It’s also, in filmmaking terms, roughly equivalent to choosing a filter on Instagram. You’re responding to what the machine gives you rather than directing what it makes. Most AI visual content being produced right now lives here. It’s reactive. It’s pretty. And it has about the same relationship to storytelling that a mood board has to a screenplay.

Level two is the Scene Mechanic — and I’ve written about this before at length, so I’ll keep it tight here. The scene mechanic doesn’t begin with imagination. They begin with function. A story already exists — a brief, a script, a brand narrative — and the mechanic’s job is to diagnose why it isn’t working as a perceptual experience, then tune it until it does. They think structurally. They understand that framing directs interpretation, that rhythm directs bodily response, that the order in which visual information arrives determines how meaning is constructed. It’s not intuition. It’s not artistry. It’s precision work — closer to an engineer listening for the wrong vibration in a system than a painter deciding what colour to use. Most people doing serious AI visual work are here, or getting here. It’s a meaningful upgrade from prompting. But it’s still not directing.

Level three is what I’ve been building toward: working as a director and cinematographer. Thinking in systems rather than scenes. Considering shot language, narrative continuity, character consistency, emotional arc, and genre grammar — before a single prompt is written. The prompt becomes the last thing, not the first. The thinking is the work.

This is not a small upgrade. It’s a different job.

For me, the territory where that upgrade matters most is micro-drama — specifically, vertical micro-drama built for brand storytelling. And that’s where things get genuinely interesting.

Micro-Drama Is Not a Short Film. It’s a Different Form.

A micro-drama isn’t just a film that’s been trimmed down to fit a phone screen. It has its own grammar. Its own pacing. Its own relationship with the viewer.

Vertical format — the 9:16 frame we scroll past a hundred times a day — demands a completely different compositional logic. Faces dominate. Negative space works differently. The edit has to earn attention in the first two seconds or it’s already lost. And the storytelling has to operate on multiple registers simultaneously: emotional hook, narrative tension, and brand integration — all without any of the three elements killing the others.

*Room Service. A BIG RED BUTTON / STORYENGINE1 / RockPaperScissors Series.*

That last part is where most branded content falls apart. Brand integration in traditional production usually means logos and pack shots bolted onto something that was already a story. The brand is an afterthought, wearing a lanyard.

In micro-drama done properly, the brand isn’t placed into the story. The brand is integrated, almost like a character in the story. It has behaviour. It has presence. It carries meaning the way a costume or a location carries meaning — not announced, but felt.

This is the craft problem I find most compelling right now. And it’s one that AI-assisted production is uniquely positioned to solve — not by making things cheaper, but by making the creative space larger. Those are different promises. The second one is the one that matters.

The Hybrid Production Model: Shoot Less. Build More.

Here’s the production idea I’ve been developing — and it’s the one I think will matter most to brands, agencies, and independent creators in the next two years.

Stop thinking of AI as just a production tool. Start thinking of it as part of the entire workflow, from idea to final output.

The traditional model goes something like this: pre-production (months), production (expensive, complex, weather-dependent, talent-dependent, logistics-heavy), post-production (long, expensive again). The field shoot is the center of gravity. Everything orbits it. And because everything orbits it, you over-invest in it — more shoot days than you need, more coverage than you’ll use, more insurance against the variables you can’t control.

The hybrid model flips that logic.

In the field, you shoot the minimum viable reality. The essential human moments. The performances that can’t be fabricated, because they shouldn’t be. The reference material that grounds the entire project in physical truth: real faces, real light, real locations, real texture. You capture what only cameras in the real world can capture. And then you stop. Cut in-field time in half. Maybe more.

Then you move into an AI studio with an AI-enabled workflow or system, such as RockPaperScissors and StoryEngine1.

There, you build on top of those references. You extend the world beyond what the location allows. You generate the establishing shots you couldn’t afford on the day. You expand the environments, add the weather you wanted, and create the scale the budget didn’t permit. The references anchor everything to reality — so the AI-generated work doesn’t float off into the uncanny valley. It’s tethered. It feels like the same world, because the DNA came from the same world.

What you gain is threefold, and it runs simultaneously. Time, because you’re no longer hostage to locations, permits, crew availability, or weather windows for every single shot — the AI studio is always open. Creative vision, because the director’s actual intent is no longer compressed by what the day allowed — it expands instead. And cost, not because you’re cutting corners, but because you’re cutting the right things and building the rest smarter. Fewer shoot days. Smaller on-location crew. More output per dollar. The economics start working in the story’s favour for once.

This isn’t a workaround. It’s a production methodology. And for micro-drama specifically — where the format demands creative precision, where brands need multiple executions, where the economics have to actually work — it may be the model that finally makes brand storytelling at scale genuinely achievable rather than just theoretically desirable.

The end of this article is just the beginning...

I’ve spent most of my career watching brands underinvest in storytelling because the economics didn’t work. Too expensive. Too slow. Too many variables. The ROI conversation always ran out of road before it reached the creative conversation. So the creative compromise became the default. And the default, as I’ve seen over thirty-odd years, is usually mediocre content with a big logo on the end.

The hybrid model changes that math. Vertical micro-drama gives it the format it needs — a form that lives where audiences actually are, that respects the attention economy without surrendering to it, that finally makes the brand-as-character idea practically achievable rather than just a nice thing to say in a brief.

I’ve been quiet for three weeks because I was building the foundation to say this properly. Not as theory. As practice.

The tools are ready. The question now is whether you have a story worth telling with them. Technology democratizes production. It doesn’t democratize vision. That part is still on you.

StoryEngine1 is my productised approach to AI-enabled brand storytelling.

It’s built for agencies that work with ambitious brands and are ready to move beyond the mood board. If the ideas in this piece resonate, it’s probably worth a conversation.